We don’t see reality clearly. We all think we perceive reality as it is. And the truth is, that’s just not the case. The brain can’t see reality as it is; it predicts reality. Right now, your brain is absorbing 11 million bits of information—the light entering your eyes, the sound of my voice, the ambient temperature of the room.

That’s the equivalent of reading War and Peace every second, twice. However, your conscious attention can only process 50 bits. That’s one sentence per second. You are only consciously aware of about 0.00045% of reality entering your brain.
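A quick sanity check of that percentage, taking the two quoted figures (11 million bits per second coming in, 50 bits per second consciously processed) at face value. The constant and function names below are illustrative only:

```python
# Figures as quoted in the talk; treated as given, not independently verified.
SENSORY_BITS_PER_SECOND = 11_000_000
CONSCIOUS_BITS_PER_SECOND = 50


def conscious_share_percent(conscious_bits: float, total_bits: float) -> float:
    """Return the consciously processed share of incoming bits, as a percentage."""
    return conscious_bits / total_bits * 100


share = conscious_share_percent(CONSCIOUS_BITS_PER_SECOND, SENSORY_BITS_PER_SECOND)
print(f"{share:.5f}%")  # prints 0.00045%
```

In other words, roughly 1 bit in every 220,000 reaches conscious attention.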

How does the brain make sense of all this? It predicts reality. We all live in a simulation inside our own minds. Our reality is filtered based on our beliefs. Study after study shows how people can observe the exact same event and see something completely different.

If you’re on a diet, you see food as larger. If you’re afraid of heights, you see distances as further. Watch a football game: the ref makes a call, and fans of one team see it as absolutely correct, fans of the other team see it as ridiculous. Think about geopolitics: people committed to the belief that one side is right see every event through that lens. We do not see reality clearly. We do not see people clearly. We see others as we believe they are.

_______________________

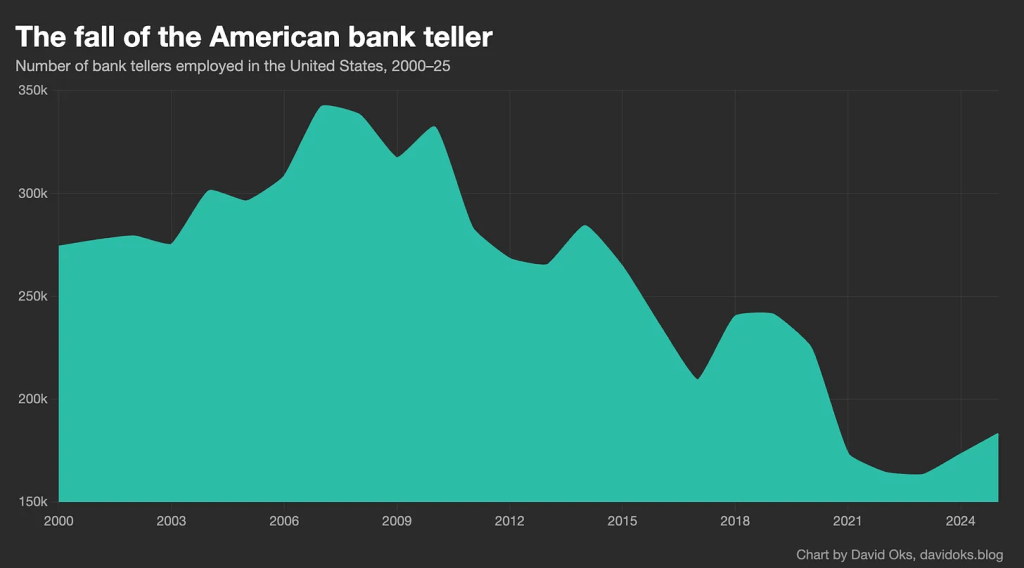

People often point out that the invention of the ATM did not reduce bank teller employment, which actually increased steadily until the late 2000s. But they miss the second half of the story, which is that another technology did – the iPhone.

Labor substitution is about comparative advantage, not absolute advantage: the relevant question for labor impacts is not whether AI can do the tasks that humans can do, but rather whether the aggregate output of humans working with AI is inferior to what AI can produce alone.

Given the vast number of frictions and bottlenecks that exist in any human domain (domains that are, after all, defined around human labor with all its warts and eccentricities, and whose workflows are designed with humans in mind), we should expect to see a serious gap between the incredible power of the technology and its impacts on economic life.

That gap will probably close faster than previous gaps did: AI is not “like” electricity or the steam engine; an AI system is literally a machine that can think and do things itself. But the gap exists, and will exist even as the technology continues to amaze us with what it can now accomplish.

The true force of a technology is felt not with the substitution of tasks, but the invention of new paradigms. When a technology automates some of what a human does within an existing paradigm, even the vast majority of what a human does within it, it’s quite rare for it to actually get rid of the human, because the definition of the paradigm around human-shaped roles creates all sorts of bottlenecks and frictions that demand human involvement.

It’s only when we see the construction of entirely new paradigms that the full power of a technology can be realized. The ATM substituted tasks, but the iPhone made them irrelevant.

______________________

Everyone is panicking about the death of reading. This narrative has a seductive simplicity. Screens are destroying civilization. Children can no longer think. We are witnessing the twilight of the literate mind.

I spend my working life in a university library, watching how people actually engage with information. What I observe doesn’t match this narrative. Not because the problems aren’t real, but because the diagnosis is wrong.

Consider a simple observation. The same person who cannot get through a novel can watch a three-hour video essay on the decline of the Ottoman Empire. The same teenager who supposedly lacks attention span can stay focused on a game for hours while parsing a complex narrative across multiple storylines, coordinating with teammates, and adapting strategy in real time. That’s not inferior cognition. It’s different cognition. And the difference isn’t the screen. It’s the environment.

Peer-reviewed research demonstrates that social media platforms exploit variable reward schedules, the same psychological mechanisms that make gambling addictive. Users don’t know what they’ll find when they open an app; they might see hundreds of likes or nothing at all. This unpredictability acts as a powerful reinforcement signal (often discussed via dopamine ‘reward prediction error’ mechanisms), keeping people checking habitually. This isn’t because screens are inherently attention-destroying. It’s because the dominant platforms have been deliberately engineered to fragment attention in service of advertising revenue.

We have been here before. Not just once, but repeatedly, in a pattern so consistent it reveals something essential about how cultural elites respond to changes in how knowledge moves through society.

- Ancient Greece – Socrates worried that writing itself would produce forgetfulness

- 1533 – Thomas More denounced Protestant texts as deadly poisons threatening to infect readers

- 17th–18th Centuries – The spread of literacy to the general population was considered corrupting

- Late 18th/Early 19th Century – Novel-reading was treated as an existential threat

- Mid-to-Late Victorian Era – Penny Dreadfuls were condemned as morally corrupting

- 20th Century – Comic books, radio, and television were each condemned in turn

I used to believe, as I was taught, that literacy was primarily about decoding text. But watching how people actually learn and think has convinced me that literacy is about something deeper: the capacity to construct and navigate environments where understanding becomes possible.

Consider those who flourish with audiobooks but struggle with printed text. For years, educators told them they had learning disabilities, by which they meant: disabilities that prevented learning through the one true method we recognize. But they don’t have learning disabilities. The instruction has a disability – it can’t accommodate different neurological architectures. Give them the same text as audio, and suddenly the ‘disability’ vanishes.

The pattern I observe repeatedly: people who ‘can’t focus’ on traditional texts can maintain extraordinary concentration when working across modes.

We haven’t become post-literate. We’ve become post-monomodal. Text hasn’t disappeared; it’s been joined by a symphony of other channels. Your brain now routinely performs feats that would have seemed impossible to your grandparents. You parse information simultaneously across text, image, sound and motion. You navigate conversations that jump between platforms and formats. You synthesize understanding from fragments scattered across a dozen different sources.

The real problem isn’t mode but habitat. We don’t struggle with video versus books. We struggle with feeds versus focus. One happens in an ecosystem designed for contemplation, the other in a casino designed for endless pull-to-refresh.

_________________________

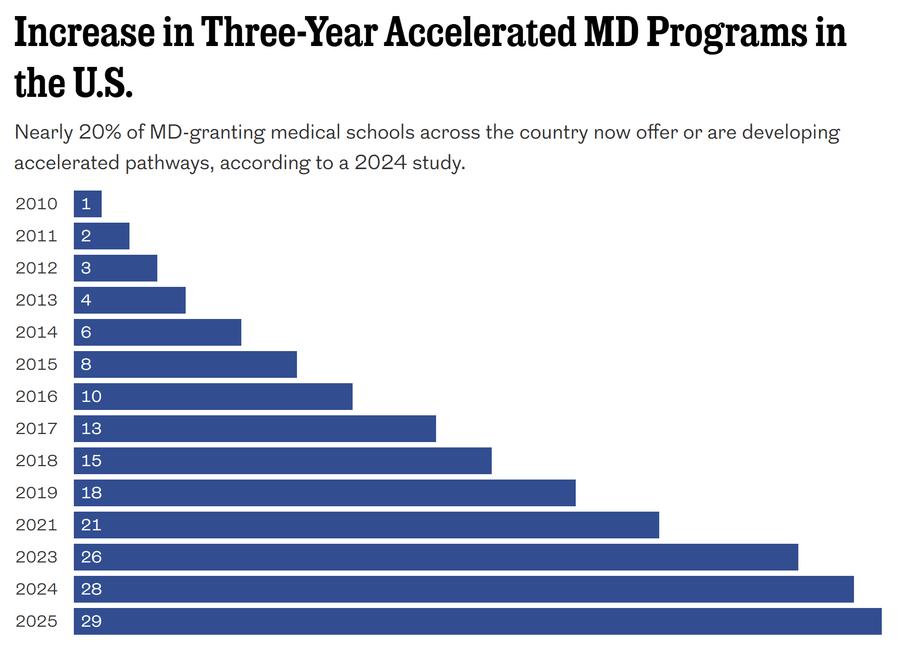

A.I. should allow medical schools to rethink whether 4 years is still necessary. If students can focus more on clinical practice and less on memorizing the Krebs cycle and molecular bio, many programs could eliminate a year, reducing both costs and physician shortages.

Drexel joins the roughly 20% of med schools that have or are developing 3-year programs for certain specialties, like family medicine, internal medicine, and pediatrics. The accelerated path can make these historically hard-to-staff specialties more appealing to students.